General

-

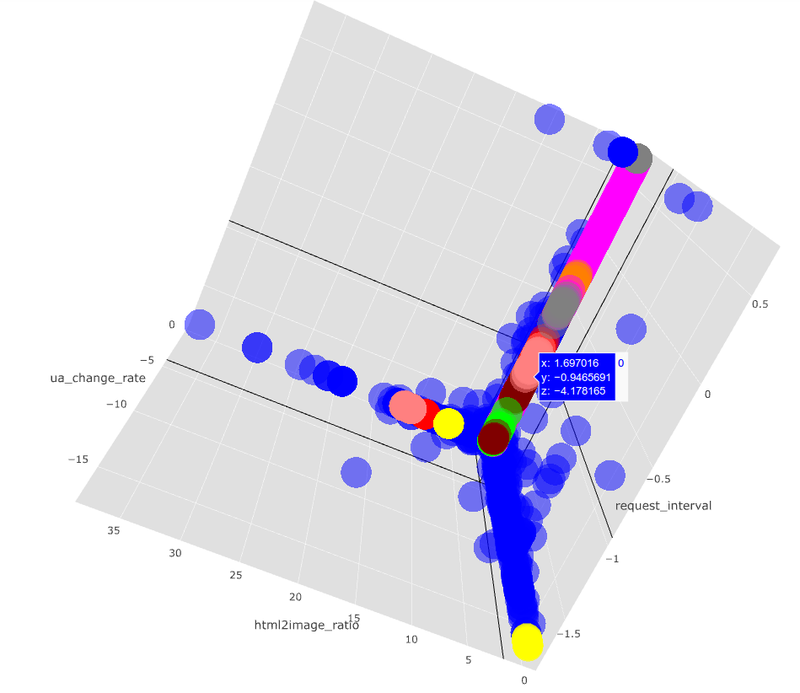

Distributed Deflect – project review

This is the fifth year of Deflect operations and an opportune time to draw some conclusions from the past and…

-

Creating a Hosts File Entry

If you wish to access your domain before your DNS has been updated, you can edit your local ‘hosts file’,…

-

How to Flush Your Local DNS Resolver’s Cache

If your computer cannot reach a certain website, this could be because your local DNS resolver’s cache contains an outdated…

-

Recommendations for Improving Your WordPress SEO

When it comes to search engine optimization (SEO), choosing the right WordPress theme framework is critical. Genesis does a great…

-

Choosing a Canonical Website Address

Canoni-what? Canonical is the word used to describe the one address that you want the world to go to when…

-

First Steps with Your eQPress Site

Your shiny new eQPress site is ready to go! Now what? Here are some recommendations. Enable “pretty” permalinks under “Settings”…

-

Learning WordPress

Here are some great resources for learning how to use WordPress. The Official WordPress User Manual – https://make.wordpress.org/support/user-manual/ This is…